Authors:

(1) Xian Liu, Snap Inc., CUHK (work done during an internship at Snap Inc.);

(2) Jian Ren, Snap Inc. (corresponding author: [email protected]);

(3) Aliaksandr Siarohin, Snap Inc.;

(4) Ivan Skorokhodov, Snap Inc.;

(5) Yanyu Li, Snap Inc.;

(6) Dahua Lin, CUHK;

(7) Xihui Liu, HKU;

(8) Ziwei Liu, NTU;

(9) Sergey Tulyakov, Snap Inc.

Table of Links

3 Our Approach and 3.1 Preliminaries and Problem Setting

3.2 Latent Structural Diffusion Model

A Appendix and A.1 Additional Quantitative Results

A.2 More Implementation Details and A.3 More Ablation Study Results

A.5 Impact of Random Seed and Model Robustness and A.6 Broader Impact and Ethical Consideration

A.7 More Comparison Results and A.8 Additional Qualitative Results

A.7 MORE COMPARISON RESULTS

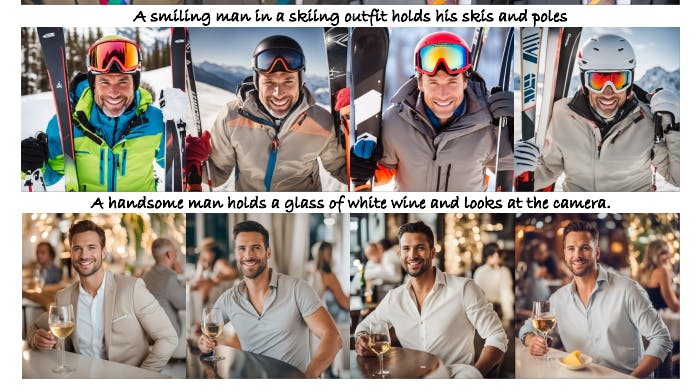

We additionally compare our proposed HyperHuman with recent open-source general text-to-image models and controllable human generation baselines, including ControlNet (Zhang & Agrawala, 2023), T2I-Adapter (Mou et al., 2023), HumanSD (Ju et al., 2023b), SD v2.1 (Rombach et al., 2022), DeepFloyd-IF (DeepFloyd, 2023), and SDXL 1.0 w/ refiner (Podell et al., 2023). We also compare with the concurrently released T2I-Adapter+SDXL[1]. We use the officially released models to generate high-resolution images of 1024 × 1024 for all methods. The results are shown in Fig. 6, 7, 8, and 9, which demonstrate that we can generate text-aligned humans of high realism.

A.8 ADDITIONAL QUALITATIVE RESULTS

We further run inference on prompts from the challenging zero-shot MS-COCO 2014 validation human subset and show additional qualitative results in Fig. 10, 11, and 12. All images are at a high resolution of 1024 × 1024. Our proposed HyperHuman framework synthesizes realistic human images of various layouts under diverse scenarios, e.g., different age groups (babies, children, young people, middle-aged people, and older people) and different contexts (canteens, in-the-wild roads, snowy mountains, and street views). Please zoom in for the best viewing.

This paper is available on arXiv under the CC BY 4.0 DEED license.

[1] https://huggingface.co/Adapter/t2iadapter